On July 4, 2022, Eric Vigoda from UC Santa Barbara gave a talk at the Discrete Math Seminar on computational phase transitions, from fast mixing to NP-hardness, for approximate counting and sampling by Markov Chain Monte Carlo (MCMC) algorithms. The title of his talk was “Computational phase transition and MCMC algorithms”.

Eric Vigoda, Computational phase transition and MCMC algorithms

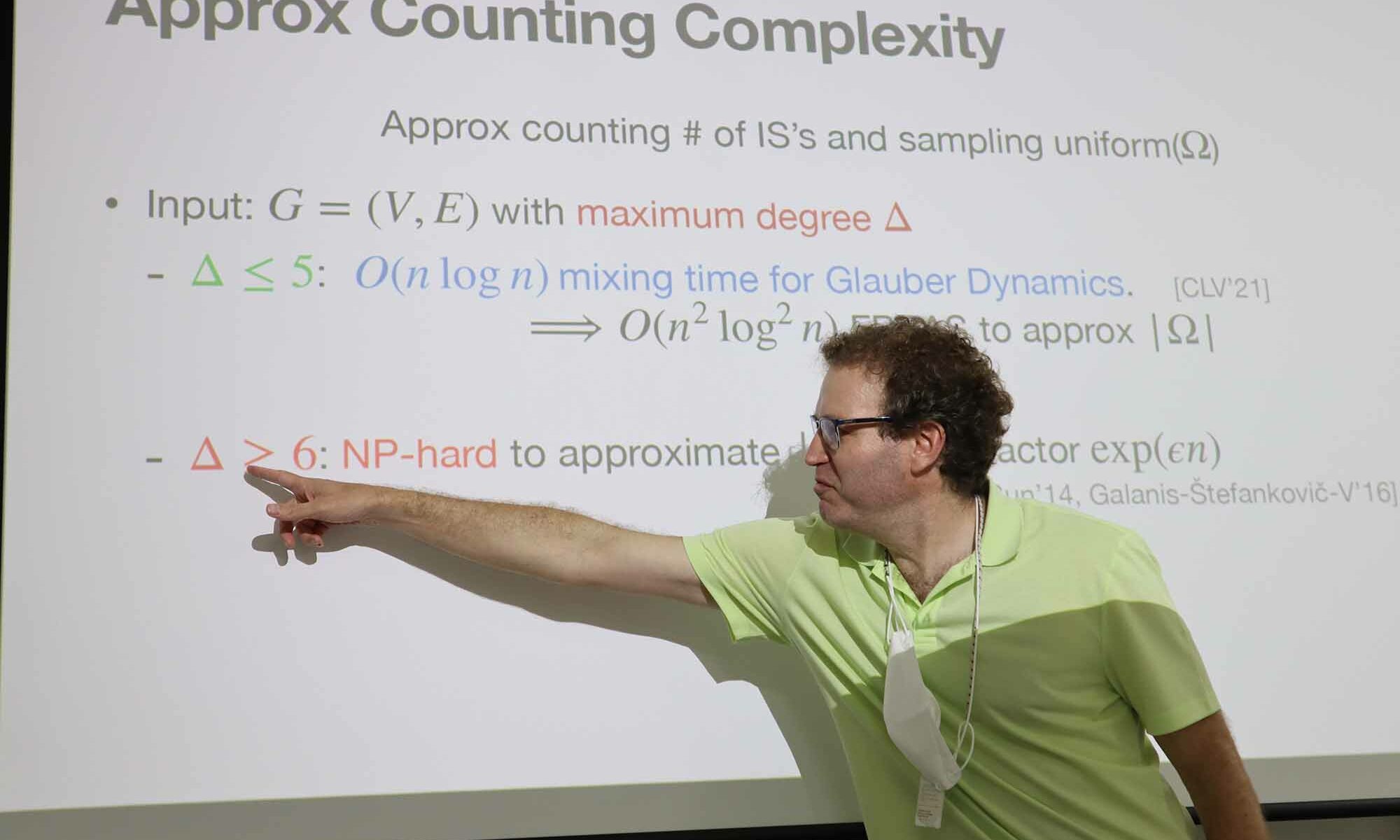

This talk will highlight recent results establishing a beautiful computational phase transition for approximate counting/sampling in (binary) undirected graphical models (such as the Ising model or weighted independent sets). The computational problem is to sample from the equilibrium distribution of the model or, equivalently, to approximate the corresponding normalizing factor known as the partition function. We show that when correlations die off on the infinite $D$-regular tree, the Gibbs sampler has optimal mixing time of $O(n \log n)$ on any graph of maximum degree $D$, whereas when the correlations persist (in the limit), the sampling/counting problems are NP-hard to approximate. The Gibbs sampler is a simple Markov Chain Monte Carlo (MCMC) algorithm. Key to these mixing results is a new technique known as spectral independence, which considers the pairwise influence of vertices. We show that spectral independence implies an optimal convergence rate for a variety of MCMC algorithms.
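To make the Gibbs sampler mentioned in the abstract concrete, here is a minimal sketch for weighted independent sets (the hardcore model). It is an illustration under stated assumptions, not code from the talk: the function names `glauber_hardcore`, `cycle_graph`, and `is_independent`, the adjacency-dictionary graph format, and the fugacity parameter `lam` are all hypothetical choices made for this example. Each step picks a uniformly random vertex and resamples it conditioned on its neighbors.

```python
import random


def glauber_hardcore(adj, lam, steps, seed=0):
    """Run single-site Gibbs (Glauber) updates for the hardcore model.

    adj   : dict mapping each vertex to a list of its neighbors (assumed format)
    lam   : fugacity, i.e. the weight given to each occupied vertex
    steps : number of single-vertex updates to perform
    Returns the set of occupied vertices after `steps` updates.
    """
    rng = random.Random(seed)
    vertices = list(adj)
    occupied = {v: False for v in vertices}  # start from the empty independent set
    p_in = lam / (1.0 + lam)  # conditional probability of occupying a free vertex

    for _ in range(steps):
        v = rng.choice(vertices)
        if any(occupied[u] for u in adj[v]):
            # A neighbor is occupied, so v is forced to be unoccupied.
            occupied[v] = False
        else:
            # No occupied neighbor: resample v from its conditional distribution.
            occupied[v] = rng.random() < p_in
    return {v for v in vertices if occupied[v]}


def cycle_graph(n):
    """Adjacency dict of the n-cycle (maximum degree 2), used only as a toy input."""
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}


def is_independent(adj, vertex_set):
    """Check that no two vertices in vertex_set are adjacent."""
    return all(u not in vertex_set for v in vertex_set for u in adj[v])


if __name__ == "__main__":
    graph = cycle_graph(12)
    sample = glauber_hardcore(graph, lam=1.0, steps=500, seed=1)
    print(sorted(sample), is_independent(graph, sample))
```

The number of steps needed for the output to be close to the equilibrium distribution is exactly what the mixing-time results address: in the regime where correlations decay, the abstract states that on the order of $n \log n$ updates suffice. The value `steps=500` above is an arbitrary choice for the toy example, not a bound derived from those results.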